Secure Input and Data Structuring

The pipeline begins with the ingestion of imaging data from various sources.

Support for DICOM and standard formats

Secure data upload and storage

Metadata extraction and indexing

Batch data ingestion
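
As a rough illustration of metadata indexing and batch ingestion, the sketch below deduplicates uploads by SHA-256 checksum and indexes them by modality. It assumes the metadata has already been parsed into dictionaries (a DICOM library would normally do that step). The function `ingest_batch` and the record layout are hypothetical, not VolPixl's API.

```python
import hashlib
from collections import defaultdict


def ingest_batch(files):
    """Ingest (name, payload, metadata) tuples; hypothetical record layout.

    Returns (records, index): records keyed by SHA-256 digest, and an
    index mapping each Modality value to the digests stored under it.
    """
    records = {}
    index = defaultdict(list)
    for name, payload, meta in files:
        digest = hashlib.sha256(payload).hexdigest()
        if digest in records:
            continue  # identical bytes already ingested, skip the duplicate
        records[digest] = {"name": name, "size": len(payload), **meta}
        index[meta.get("Modality", "UNKNOWN")].append(digest)
    return records, dict(index)


# Made-up batch: the third entry repeats the bytes of the first.
batch = [
    ("ct_001.dcm", b"ct-bytes", {"Modality": "CT", "StudyInstanceUID": "1.2.3"}),
    ("mr_001.dcm", b"mr-bytes", {"Modality": "MR", "StudyInstanceUID": "1.2.4"}),
    ("ct_001_copy.dcm", b"ct-bytes", {"Modality": "CT", "StudyInstanceUID": "1.2.3"}),
]
records, index = ingest_batch(batch)
```

Keying records by content digest is one simple way to make repeated batch uploads idempotent; a production system would likely also key on study and series identifiers.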

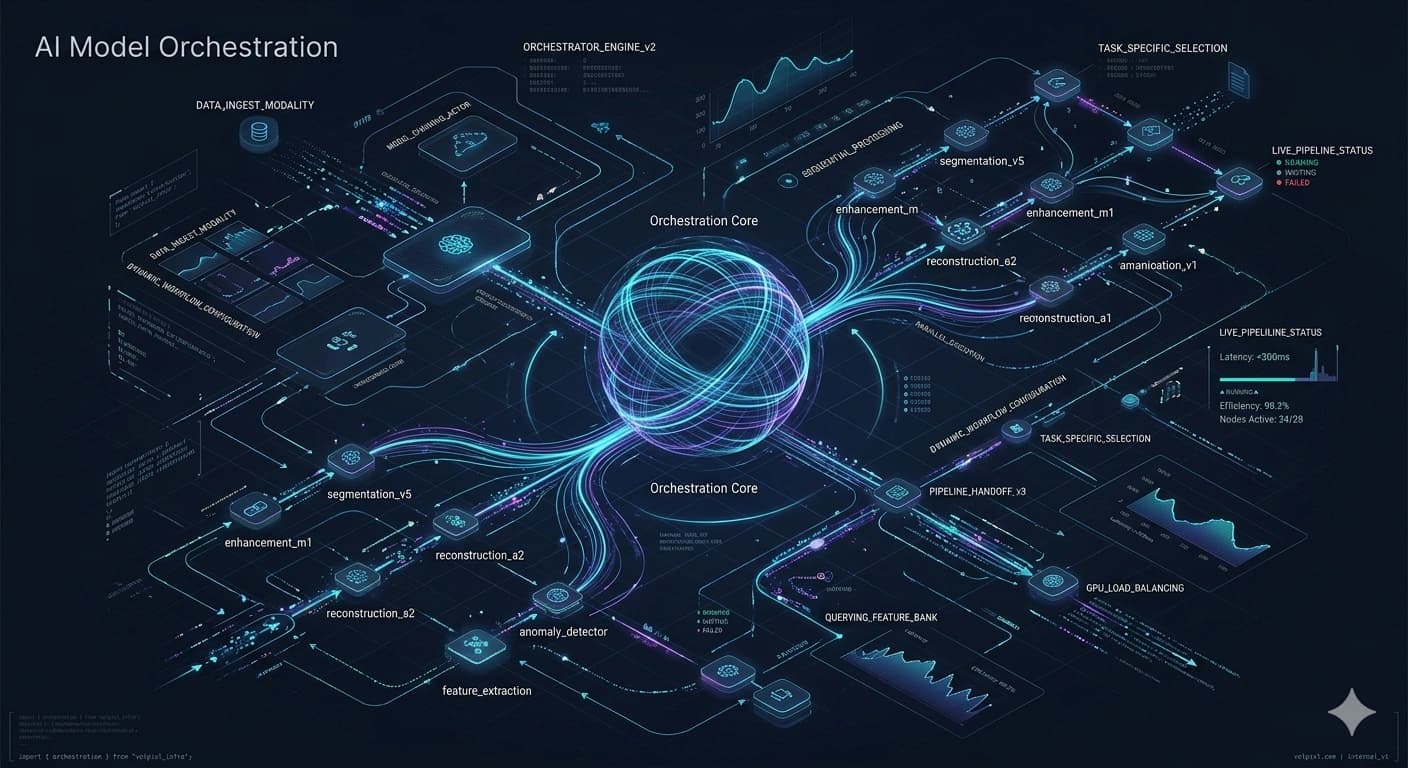

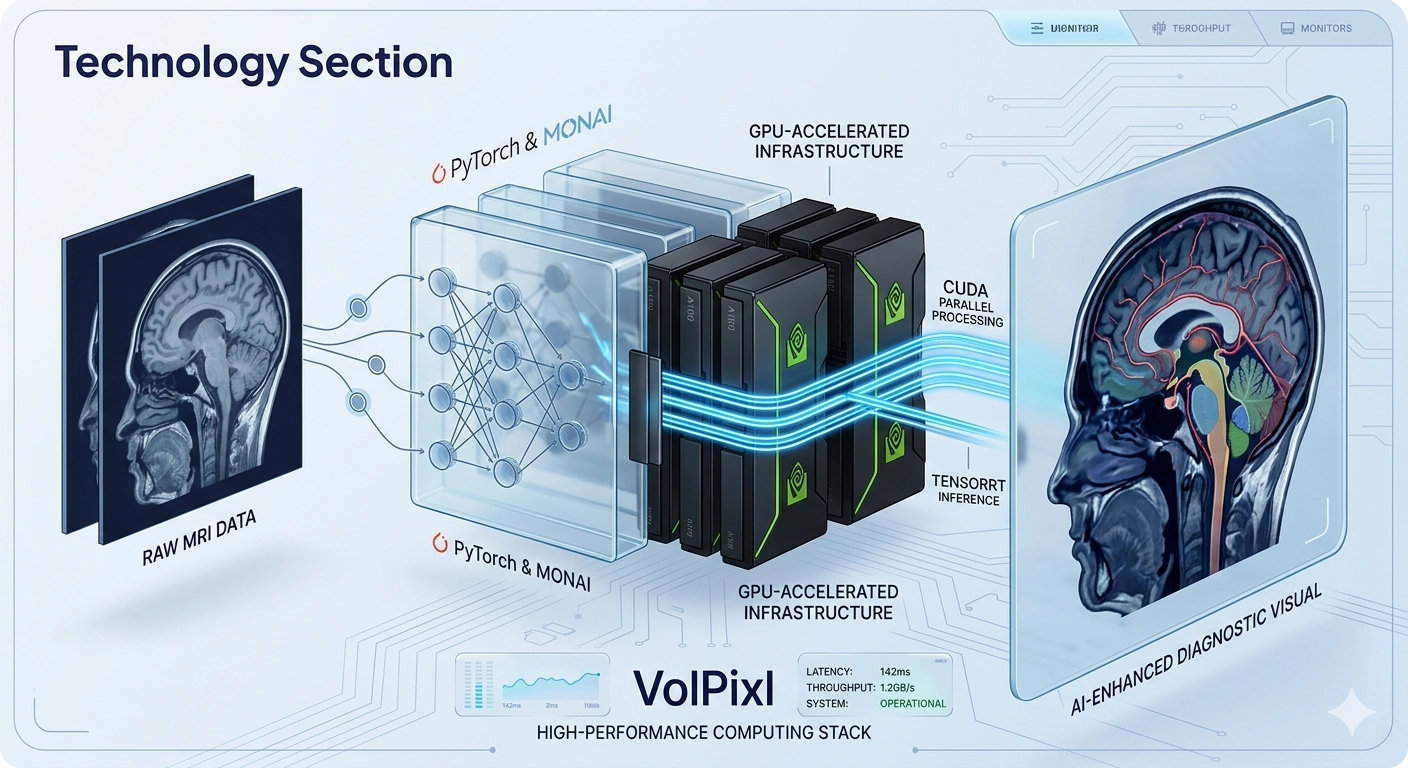

A structured, AI-powered pipeline that transforms raw medical imaging data into high-quality, visualization-ready outputs with speed, accuracy, and scalability.

6 Pipeline Stages

GPU Acceleration Path

DICOM Input Ready

API Output Delivery

Structured Processing Across Ingestion, AI, Visualization, and Delivery

Medical imaging workflows involve multiple stages of data handling, processing, and transformation. Without a structured pipeline, these processes can become inefficient and inconsistent.

VolPixl's Image Processing Pipeline is designed to streamline the entire workflow, from data ingestion to final output generation.

By integrating AI models with optimized compute infrastructure, the pipeline ensures efficient processing, consistent output quality, and scalable performance.

Visualization-Ready

Modular processing keeps each stage flexible while maintaining a structured flow from source data to final output.

A clear route from secure imaging input to visualization-ready output keeps teams aligned and workloads predictable.

Secure input

Normalize data

Enhance and analyze

Refine outputs

Prepare rendering

Export and deliver

The pipeline is modular, allowing flexibility while maintaining a structured flow across all stages.
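
One way to picture this modular structure is as an ordered list of interchangeable stage functions. The sketch below is only an illustration under that assumption: the `Study` container, the stage names, and `run_pipeline` are hypothetical, and each placeholder stage merely records that it ran.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Study:
    """Hypothetical container handed from one stage to the next."""
    pixels: List[float]
    history: List[str] = field(default_factory=list)


Stage = Callable[[Study], Study]


def make_stage(name: str) -> Stage:
    """Build a placeholder stage that only logs its own name."""
    def stage(study: Study) -> Study:
        study.history.append(name)
        return study
    return stage


# Names mirror the six steps listed above.
STAGE_NAMES = [
    "secure_input",
    "normalize_data",
    "enhance_and_analyze",
    "refine_outputs",
    "prepare_rendering",
    "export_and_deliver",
]


def run_pipeline(study: Study, stages: List[Stage]) -> Study:
    """Apply each stage in order; any stage can be swapped independently."""
    for stage in stages:
        study = stage(study)
    return study


result = run_pipeline(Study(pixels=[0.0, 1.0]), [make_stage(n) for n in STAGE_NAMES])
```

Because every stage shares the same signature, replacing or reordering a stage does not require changes to the others.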

Preprocessing ensures that input data is normalized and optimized for AI processing.

Image normalization

Noise filtering

Resolution alignment

Data validation
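
The preprocessing steps above can be sketched on a single row of pixel values. This is a minimal illustration using only the standard library; the function names are hypothetical, min-max scaling stands in for whatever normalization the pipeline actually applies, and a median filter stands in for its noise filtering.

```python
import math
import statistics


def validate(pixels):
    """Reject empty rows and rows containing NaN or infinite values."""
    if not pixels:
        raise ValueError("empty image row")
    if any(not math.isfinite(p) for p in pixels):
        raise ValueError("non-finite pixel value")


def normalize(pixels):
    """Min-max scale values into the range [0, 1]."""
    lo, hi = min(pixels), max(pixels)
    if hi == lo:
        return [0.0] * len(pixels)  # flat row: avoid division by zero
    return [(p - lo) / (hi - lo) for p in pixels]


def median_filter(pixels, radius=1):
    """Replace each value with the median of its neighborhood."""
    out = []
    for i in range(len(pixels)):
        window = pixels[max(0, i - radius): i + radius + 1]
        out.append(statistics.median(window))
    return out


row = [-1000, 0, 1000]
validate(row)
scaled = normalize(row)
despiked = median_filter([0, 0, 100, 0, 0])
```

A median filter is used here because it removes isolated spikes without blurring edges as much as a plain average would.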

AI models are applied to enhance and process imaging data.

Super-resolution enhancement

Noise reduction (denoising)

Segmentation and ROI detection

Feature extraction
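
To make segmentation, ROI detection, and feature extraction concrete, the sketch below uses a fixed intensity threshold as a stand-in for a trained model. That substitution is an assumption made purely for illustration; the actual AI models are not described in this document, and all function names are hypothetical.

```python
def segment(image, threshold):
    """Return a binary mask: 1 where the pixel meets the threshold."""
    return [[1 if value >= threshold else 0 for value in row] for row in image]


def bounding_box(mask):
    """Return (top, left, bottom, right) of the masked region, or None."""
    rows = [r for r, row in enumerate(mask) if any(row)]
    cols = [c for row in mask for c, value in enumerate(row) if value]
    if not rows:
        return None
    return (min(rows), min(cols), max(rows), max(cols))


def extract_features(image, mask):
    """Compute area and mean intensity over the masked pixels."""
    values = [
        image[r][c]
        for r, row in enumerate(mask)
        for c, flag in enumerate(row)
        if flag
    ]
    if not values:
        return {"area": 0, "mean_intensity": None}
    return {"area": len(values), "mean_intensity": sum(values) / len(values)}


image = [
    [0, 0, 0, 0],
    [0, 9, 8, 0],
    [0, 7, 9, 0],
    [0, 0, 0, 0],
]
mask = segment(image, threshold=5)
roi = bounding_box(mask)
features = extract_features(image, mask)
```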

Post-processing ensures that outputs are consistent and ready for visualization.

Output smoothing

Artifact correction

Quality consistency checks

Format optimization
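
A minimal sketch of what such post-processing checks could look like, assuming outputs are rows of values expected to lie in [0, 1]. The helper names, the moving-average smoother, and the acceptance criteria are hypothetical choices for illustration.

```python
def clamp(pixels, lo=0.0, hi=1.0):
    """Clip out-of-range values, a crude form of artifact correction."""
    return [min(max(p, lo), hi) for p in pixels]


def smooth(pixels, radius=1):
    """Moving-average smoothing with a window that shrinks at the edges."""
    out = []
    for i in range(len(pixels)):
        window = pixels[max(0, i - radius): i + radius + 1]
        out.append(sum(window) / len(window))
    return out


def consistency_report(outputs, expected_len):
    """Return names of outputs with the wrong length or values outside [0, 1]."""
    failed = []
    for name, px in outputs.items():
        if not px or len(px) != expected_len or min(px) < 0.0 or max(px) > 1.0:
            failed.append(name)
    return failed


clamped = clamp([-0.2, 0.5, 1.3])
smoothed = smooth([0.0, 3.0, 0.0])
failed = consistency_report(
    {"a": [0.1, 0.2], "b": [0.1], "c": [0.1, 1.5]}, expected_len=2
)
```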

Processed data is structured for visualization engines.

Data formatting for 2D/3D rendering

Multi-view alignment

Resolution optimization
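
For multi-view alignment, a common arrangement is to store a volume once and derive axial, coronal, and sagittal slices from it. The sketch below assumes a nested-list volume indexed as `volume[z][y][x]`; that layout and the function names are illustrative assumptions, not the pipeline's actual data format.

```python
def axial(volume, z):
    """Slice at a fixed z: rows run along y, columns along x."""
    return [row[:] for row in volume[z]]


def coronal(volume, y):
    """Slice at a fixed y: rows run along z, columns along x."""
    return [plane[y][:] for plane in volume]


def sagittal(volume, x):
    """Slice at a fixed x: rows run along z, columns along y."""
    return [[row[x] for row in plane] for plane in volume]


# Toy 2 x 3 x 4 volume whose voxel value encodes its own (z, y, x) position.
volume = [
    [[100 * z + 10 * y + x for x in range(4)] for y in range(3)]
    for z in range(2)
]
ax = axial(volume, 1)
co = coronal(volume, 2)
sa = sagittal(volume, 3)
```

Encoding the coordinates into the voxel values makes it easy to confirm that all three views address the same voxel.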

Final outputs are generated and delivered for use or integration.

High-resolution image export

API-based output delivery

Integration with external systems

Visualization-ready formats
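
API-based delivery usually means wrapping the exported image in a structured payload that the receiver can verify. The sketch below shows one possible shape using JSON with a checksum; the field names and both functions are hypothetical and do not describe VolPixl's actual API.

```python
import base64
import hashlib
import json


def build_delivery_payload(study_uid, image_bytes, media_type="image/png"):
    """Serialize an exported image plus integrity metadata as JSON."""
    body = {
        "study_uid": study_uid,
        "media_type": media_type,
        "size_bytes": len(image_bytes),
        "sha256": hashlib.sha256(image_bytes).hexdigest(),
        "data_base64": base64.b64encode(image_bytes).decode("ascii"),
    }
    return json.dumps(body, sort_keys=True)


def read_delivery_payload(payload):
    """Decode a payload and verify its checksum before returning the bytes."""
    body = json.loads(payload)
    data = base64.b64decode(body["data_base64"])
    if hashlib.sha256(data).hexdigest() != body["sha256"]:
        raise ValueError("checksum mismatch")
    return body["study_uid"], data


exported = b"\x89PNG\r\n\x1a\n-placeholder-image-bytes"
payload = build_delivery_payload("1.2.3", exported)
received_uid, received_bytes = read_delivery_payload(payload)
```

Inline base64 keeps the example self-contained; for large images a real integration would more plausibly return a download reference instead of embedding the bytes.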

The pipeline is designed for automation to reduce manual effort and improve efficiency.

Workflow scheduling

Batch processing

Task orchestration

Error handling and retry mechanisms
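
Retry mechanisms are commonly implemented with exponential backoff. The sketch below is one minimal version under that assumption; `run_with_retry` and its parameters are hypothetical names, and the `sleep` argument is injectable so the waiting behavior can be tested without real delays.

```python
import time


def run_with_retry(task, max_attempts=3, base_delay=0.5, sleep=time.sleep):
    """Call task() until it succeeds or max_attempts is exhausted.

    The wait doubles after each failure: base_delay, 2 * base_delay, ...
    The final failure is re-raised so the caller can handle it.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception:
            if attempt == max_attempts:
                raise
            sleep(base_delay * 2 ** (attempt - 1))
```

A task that fails twice with a transient error and then succeeds would be retried after waits of 0.5 and 1.0 seconds with the defaults shown.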

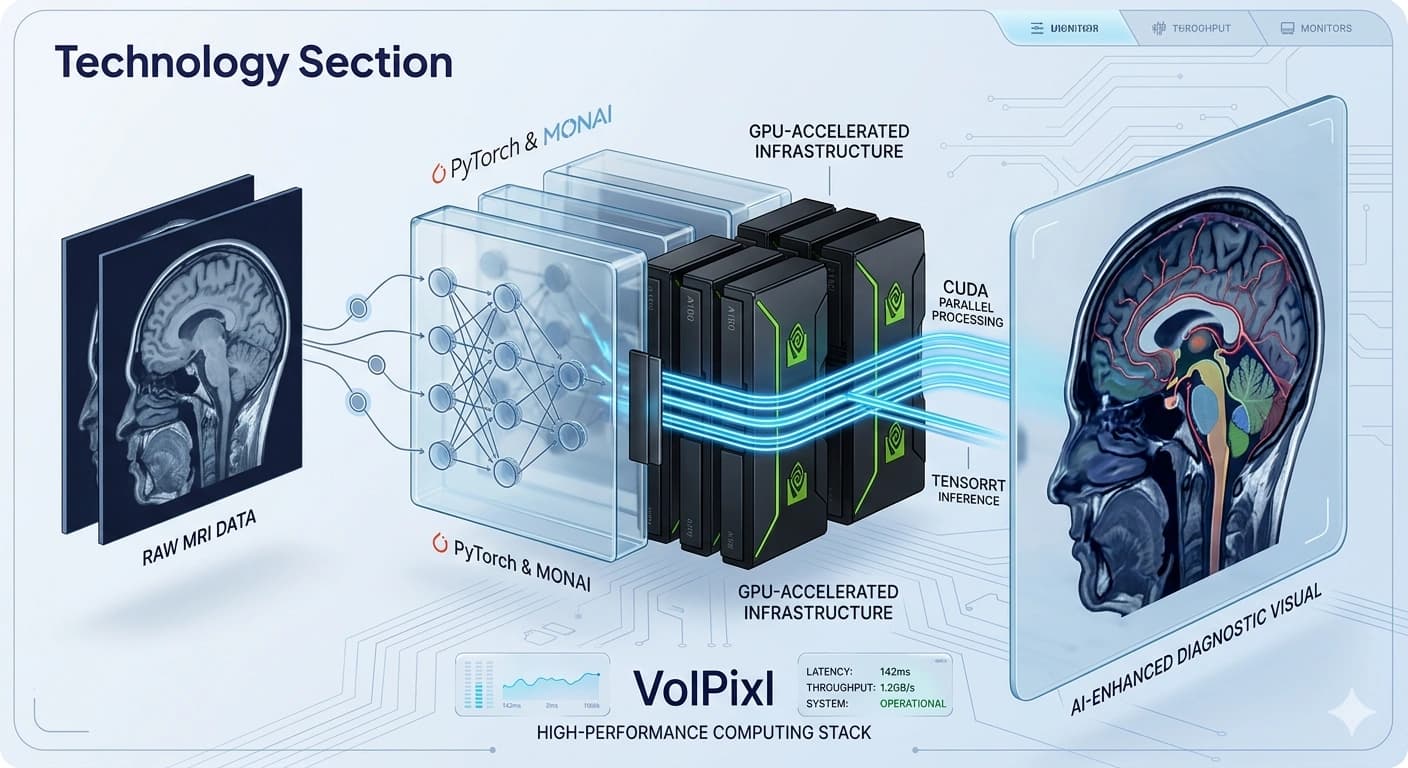

The pipeline incorporates multiple optimization techniques to ensure high performance.

GPU-accelerated processing

CUDA-based parallel computation

TensorRT-optimized inference

Efficient memory management
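
GPU inference is typically fed fixed-size batches so that only a bounded amount of data is resident in memory at once. The sketch below illustrates just that batching idea in plain Python; it makes no CUDA or TensorRT calls, and `iter_batches` is a hypothetical helper name.

```python
from itertools import islice


def iter_batches(items, batch_size):
    """Yield lists of up to batch_size items without loading everything at once."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    iterator = iter(items)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return
        yield batch


# Slice indices stand in for image slices streamed from storage.
batches = list(iter_batches(range(5), batch_size=2))
```

Because the function consumes any iterable lazily, the source can be a generator that reads slices from disk one at a time.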

The pipeline supports scalable processing across large datasets.

Parallel processing pipelines

Multi-GPU support

Horizontal scaling

Load balancing
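
Load balancing across workers can be approximated with a greedy least-loaded strategy: sort jobs from largest to smallest and give each one to the worker with the smallest running total. The sketch below assumes job costs are known in advance; `assign_jobs` and the cost values are hypothetical.

```python
import heapq


def assign_jobs(job_costs, n_workers):
    """Greedy least-loaded assignment of jobs to workers.

    job_costs maps job name to estimated cost. Returns a dict mapping
    worker index to the list of jobs assigned to it.
    """
    heap = [(0, worker) for worker in range(n_workers)]
    heapq.heapify(heap)
    assignment = {worker: [] for worker in range(n_workers)}
    # Placing the largest jobs first tends to give a more even split.
    for job, cost in sorted(job_costs.items(), key=lambda kv: -kv[1]):
        load, worker = heapq.heappop(heap)
        assignment[worker].append(job)
        heapq.heappush(heap, (load + cost, worker))
    return assignment


plan = assign_jobs({"a": 8, "b": 7, "c": 6, "d": 5, "e": 4}, n_workers=2)
```

This heuristic is not guaranteed to be optimal, but it is simple and usually close enough for scheduling batches across a small pool of GPUs or nodes.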

The pipeline integrates with existing imaging systems and workflows.

DICOM compatibility

API-based integration

Integration with PACS

Flexible deployment options
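
A first step in DICOM compatibility is recognizing the file format. DICOM Part 10 files begin with a 128-byte preamble followed by the ASCII characters `DICM`, so a simple signature check can route incoming files. The sketch below is illustrative: `sniff_format` is a hypothetical helper, and it will not recognize legacy DICOM files written without the preamble.

```python
def sniff_format(data: bytes) -> str:
    """Guess a file format from its leading bytes."""
    # DICOM Part 10: 128-byte preamble, then the magic string "DICM".
    if len(data) >= 132 and data[128:132] == b"DICM":
        return "dicom"
    if data.startswith(b"\x89PNG\r\n\x1a\n"):
        return "png"
    if data.startswith(b"\xff\xd8\xff"):
        return "jpeg"
    # TIFF: little-endian ("II") or big-endian ("MM") byte-order marker.
    if data[:4] in (b"II*\x00", b"MM\x00*"):
        return "tiff"
    return "unknown"


dicom_like = bytes(128) + b"DICM" + b"\x00"
detected = sniff_format(dicom_like)
```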

The same structured pipeline supports operational imaging workflows, enhancement, research, and application-level integration.

Efficiently process large datasets in hospitals and radiology centers.

Improve image quality using AI-driven processing.

Handle complex datasets for analysis and visualization.

Enable startups to integrate imaging pipelines via APIs.

A unified pipeline reduces manual work, improves consistency, and keeps imaging workloads ready for enterprise scale.

End-to-end automation

Faster processing times

Consistent output quality

Scalable for enterprise workloads

Seamless integration

Leverage a structured, high-performance pipeline to process and enhance medical imaging data efficiently.