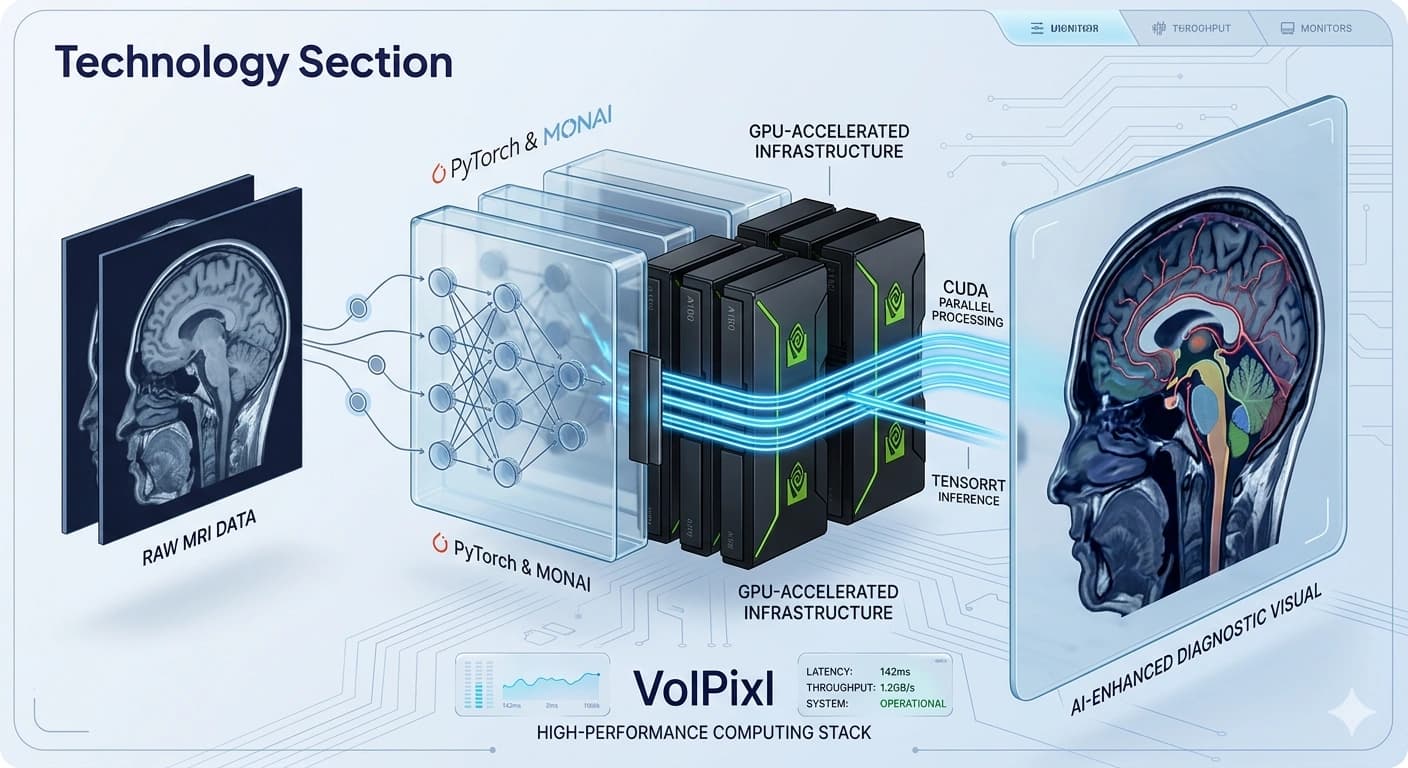

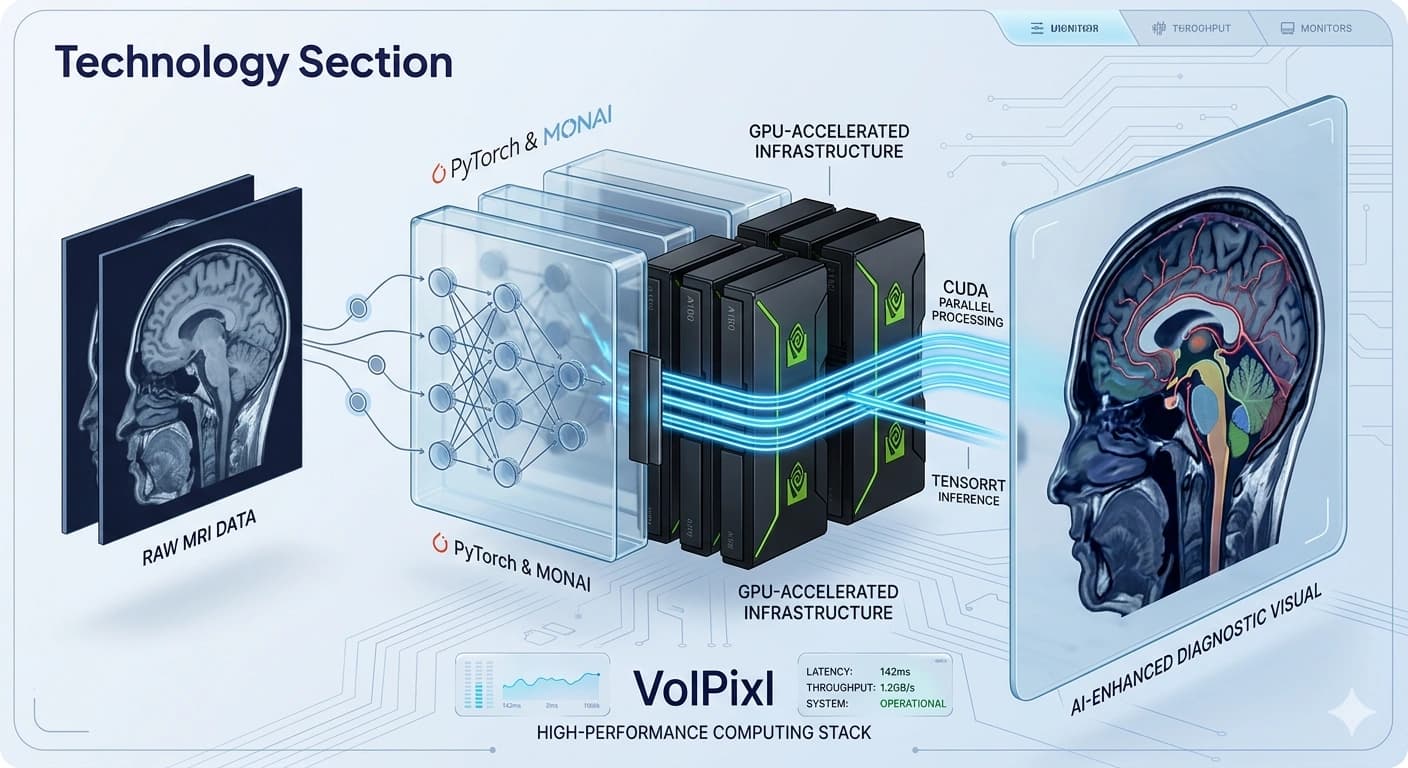

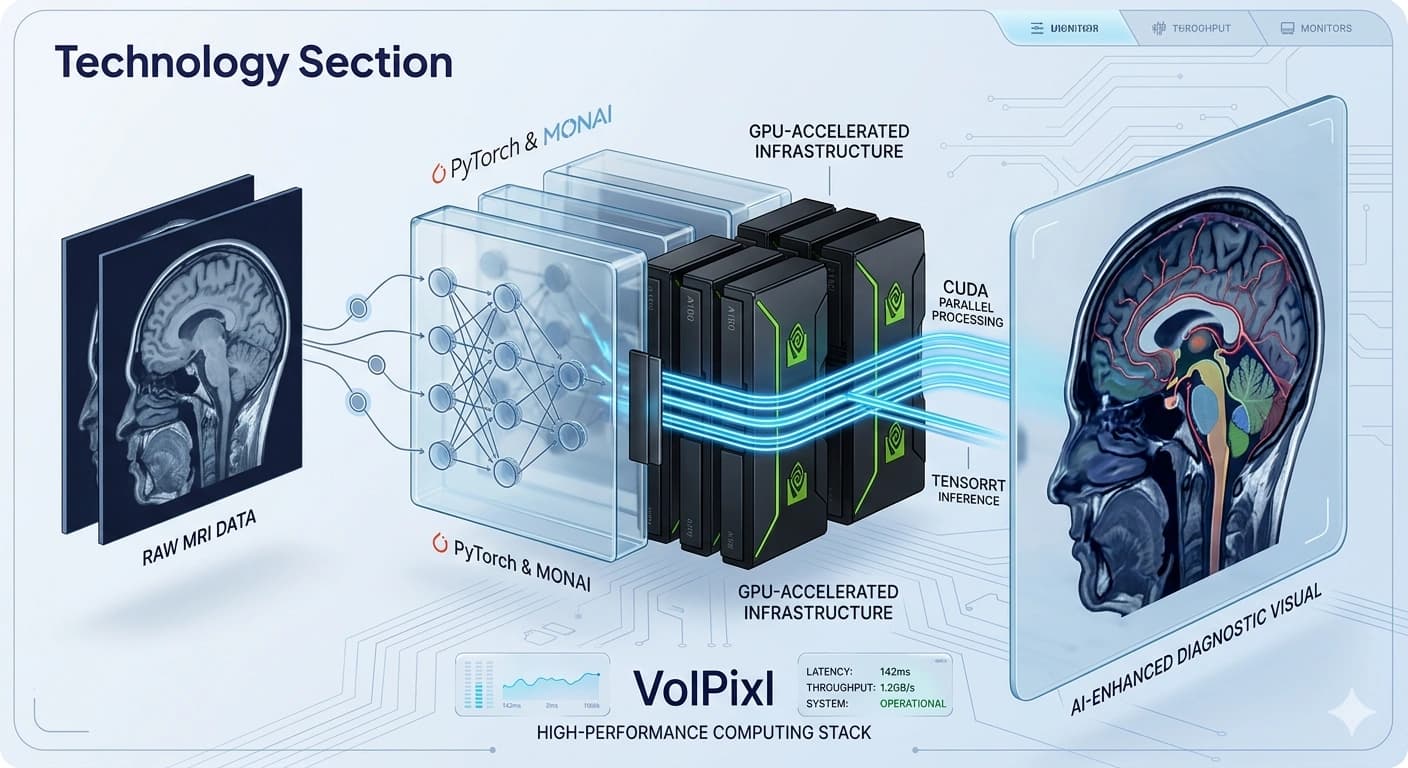

Scalable AI Architecture for Medical Imaging

A modular, high-performance AI architecture designed to process, enhance, and visualize complex medical imaging data with speed, efficiency, and reliability.

Layered System Design

Unified Data Flow

GPU Execution Path

Low Latency Output Delivery

Layered AI Coordination Across Data, Models, Inference, and Visualization

Modern medical imaging workflows require more than standalone AI models. They demand a cohesive architecture that integrates data processing, model execution, and visualization seamlessly.

VolPixl's AI architecture is built as a layered, modular system that orchestrates multiple AI components within a unified pipeline.

It ensures efficient data flow, optimized model performance, and scalable execution across different workloads while maintaining low latency and consistent output quality.

System Diagram

Modular layers coordinate data preparation, model execution, inference, and delivery.

Core Design Principles

The architecture is designed to remain flexible, fast, and interoperable as imaging workflows evolve across datasets, teams, and deployment environments.

Modularity

Each component of the AI system operates independently, enabling flexibility and easy updates.

Scalability

The architecture supports scaling across datasets, users, and compute resources.

Efficiency

Optimized pipelines ensure minimal latency and efficient resource utilization.

Interoperability

Designed to integrate with external systems and existing workflows.

Layered AI System Design

A visual architecture map shows how imaging data moves through the platform, from secure ingestion and preprocessing to model execution, visualization, and external delivery.

Ingest, index, and protect imaging data.

Data Layer

System Component

Handles ingestion, storage, and preparation of imaging data.

Capabilities

DICOM and standard format support

Metadata extraction

Data normalization

Secure storage and retrieval
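As a rough illustration of metadata extraction and data normalization, the sketch below reads a few header fields and min-max scales raw intensities to [0, 1]. It assumes the header has already been parsed into a plain dictionary keyed by the DICOM attribute keywords `Modality`, `Rows`, and `Columns`. `StudyRecord`, `normalize_intensities`, and `build_record` are hypothetical names chosen for this example, not part of VolPixl's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StudyRecord:
    """Hypothetical container for extracted metadata and normalized pixels."""
    modality: str
    rows: int
    columns: int
    pixels: list  # flat list of floats scaled to [0, 1]


def normalize_intensities(values):
    """Min-max scale raw intensities to the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # A constant image carries no contrast; map everything to 0.0.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def build_record(header, raw_pixels):
    """Extract a few header fields and normalize the pixel data."""
    rows, columns = int(header["Rows"]), int(header["Columns"])
    if rows * columns != len(raw_pixels):
        raise ValueError("pixel count does not match Rows x Columns")
    return StudyRecord(
        modality=str(header["Modality"]).upper(),
        rows=rows,
        columns=columns,
        pixels=normalize_intensities(raw_pixels),
    )
```

Min-max scaling is only one possible normalization; a production system might instead apply modality-specific windowing or rescale parameters stored in the header.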

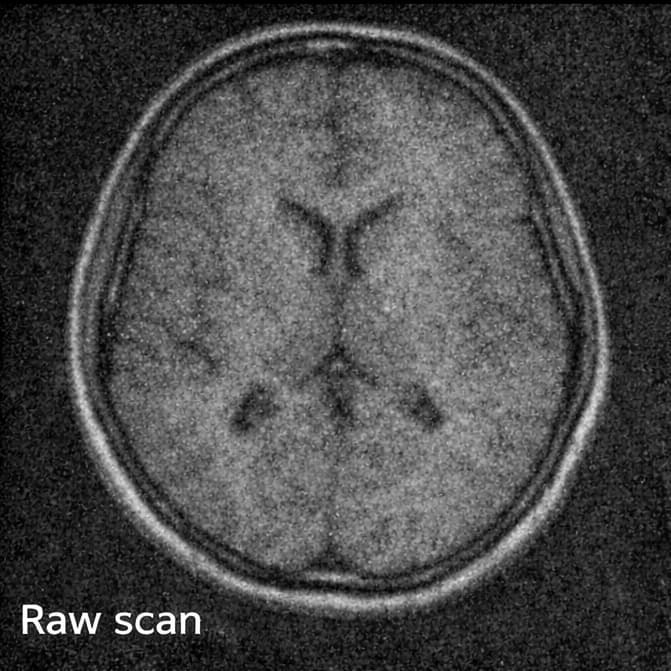

Clean and standardize inputs before model execution.

Preprocessing Layer

System Component

Prepares data for AI model execution.

Processes

Noise filtering

Resolution normalization

Data standardization

Input validation
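The preprocessing steps above can be sketched with a small example that validates a 2D image and applies a 3x3 median filter, one common noise-filtering technique. The source does not say which filter is actually used, so the median filter is an assumption, and `validate_image` and `median_filter_3x3` are hypothetical names.

```python
from statistics import median


def validate_image(image):
    """Reject empty or ragged 2D inputs before any processing runs."""
    if not image or not image[0]:
        raise ValueError("image is empty")
    width = len(image[0])
    if any(len(row) != width for row in image):
        raise ValueError("rows have inconsistent lengths")


def median_filter_3x3(image):
    """Replace each pixel with the median of its 3x3 neighborhood.

    At the borders the window is clipped to the pixels that exist.
    """
    validate_image(image)
    height, width = len(image), len(image[0])
    filtered = []
    for y in range(height):
        row = []
        for x in range(width):
            window = [
                image[ny][nx]
                for ny in range(max(0, y - 1), min(height, y + 2))
                for nx in range(max(0, x - 1), min(width, x + 2))
            ]
            row.append(median(window))
        filtered.append(row)
    return filtered
```

A median filter suppresses isolated outlier pixels while preserving edges better than a simple mean, which is why it is often used as a first denoising pass.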

Run task-specific AI models and deep learning pipelines.

Model Layer

System Component

Core AI processing layer where models are executed.

Components

Image enhancement models

Segmentation models

Feature extraction models

Reconstruction models

Characteristics

Optimized deep learning architectures

Task-specific model pipelines

Efficient batch processing

Optimize production execution for speed and throughput.

Inference Layer

System Component

Executes AI models efficiently in production environments.

Features

Tensor optimization engines

Low-latency inference pipelines

GPU-accelerated execution

Batch and real-time processing
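To make the distinction between batch and real-time processing concrete, here is a minimal sketch of a batching helper. It assumes a model is exposed as a callable that takes a list of inputs and returns one result per input; `run_batched` and `model_fn` are hypothetical names. Setting `max_batch_size` to 1 approximates a real-time, one-request-at-a-time path.

```python
def run_batched(model_fn, items, max_batch_size):
    """Run model_fn over items in groups of at most max_batch_size.

    model_fn is assumed to accept a list and return a list of equal length.
    """
    if max_batch_size < 1:
        raise ValueError("max_batch_size must be at least 1")
    results = []
    for start in range(0, len(items), max_batch_size):
        batch = items[start:start + max_batch_size]
        results.extend(model_fn(batch))
    return results
```

Larger batches usually improve GPU throughput at the cost of per-request latency, so the batch size is a tuning parameter rather than a fixed value.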

Coordinate jobs, queues, resources, and recovery logic.

Orchestration Layer

System Component

Manages workflow execution and resource allocation.

Capabilities

Task scheduling

Workflow automation

Load balancing

Error handling and retries
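Error handling and retries can be illustrated with a short retry helper that uses exponential backoff. This is a sketch under stated assumptions: the retry policy, the default values, and the name `run_with_retries` are illustrative and are not taken from VolPixl's orchestration layer.

```python
import time


def run_with_retries(task, max_attempts=3, base_delay=0.5, sleep=time.sleep):
    """Call task() until it succeeds or max_attempts is reached.

    The wait doubles after each failure: base_delay, 2 * base_delay, ...
    The sleep function is injectable so the helper can be tested quickly.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            sleep(base_delay * 2 ** (attempt - 1))
```

In practice a scheduler would likely retry only errors known to be transient, such as timeouts, and would fail fast on invalid input.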

Render processed outputs for review and interaction.

Visualization Layer

System Component

Transforms processed data into visual outputs.

Features

2D and 3D rendering

Interactive visualization

Multi-view support
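Multi-view support typically means showing axial, coronal, and sagittal slices of the same volume. The sketch below extracts the three views from a volume stored as nested lists indexed `volume[z][y][x]`. That layout and the function names are assumptions made for illustration; a real renderer would operate on GPU-resident arrays.

```python
def axial(volume, z):
    """Slice at a fixed z: a 2D image indexed [y][x]."""
    return [row[:] for row in volume[z]]


def coronal(volume, y):
    """Slice at a fixed y: a 2D image indexed [z][x]."""
    return [plane[y][:] for plane in volume]


def sagittal(volume, x):
    """Slice at a fixed x: a 2D image indexed [z][y]."""
    return [[row[x] for row in plane] for plane in volume]
```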

Deliver outputs to clinical systems and external products.

Integration Layer

System Component

Connects the AI system with external applications.

Capabilities

API-based access

Integration with PACS and imaging systems

Data export and delivery

Dynamic Routing

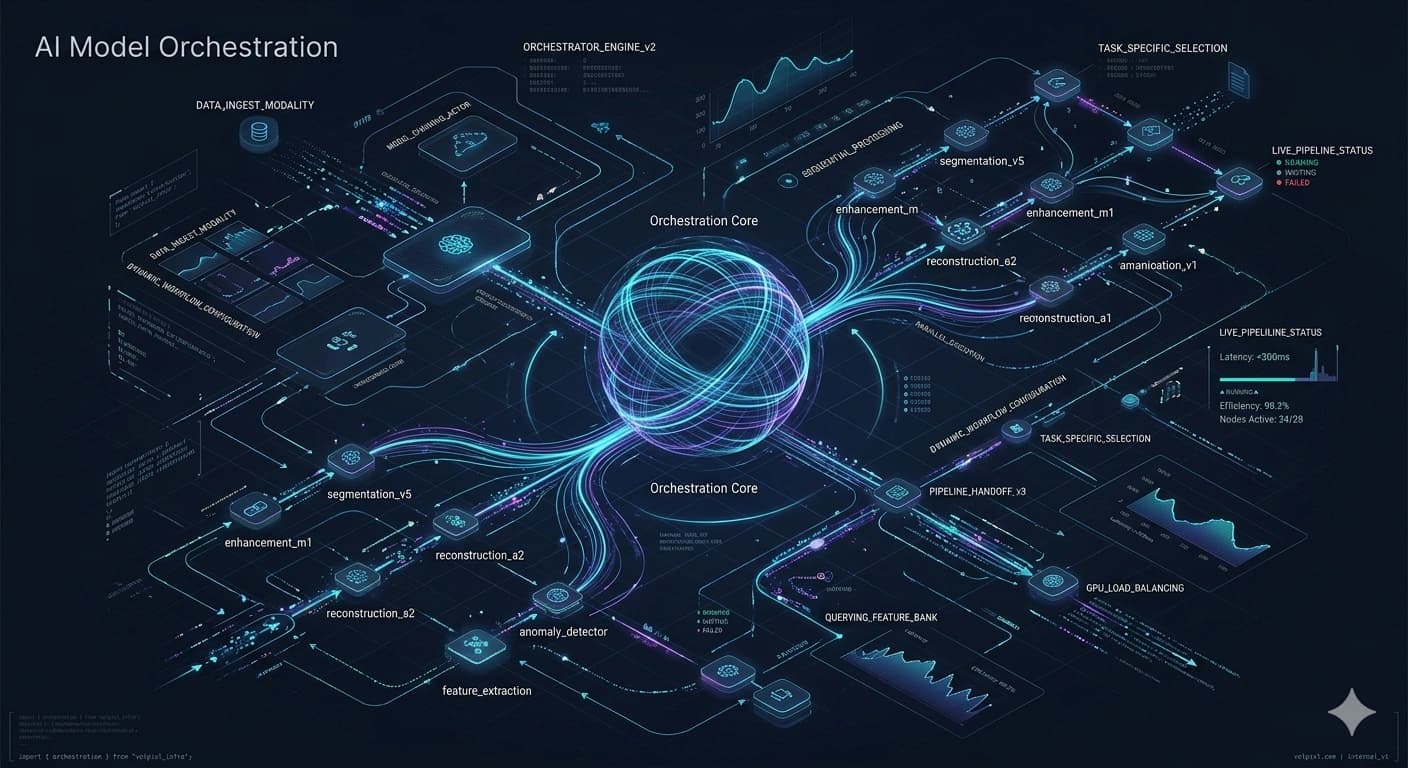

Multi-Model Pipeline Synchronization

Coordinated Execution of AI Models

VolPixl orchestrates multiple specialized AI models within a unified workflow. By chaining models into pipelines, it routes each study to the specialized architecture best suited to its modality and task.

Sequential & Parallel Execution

Pipeline-based Model Chaining

Task-specific Model Selection

Dynamic Workflow Configuration
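The ideas listed above (model chaining, task-specific selection, and parallel execution) can be sketched in a few lines. Everything here is hypothetical: the stage names, the `PIPELINES` registry, and the modality keys are placeholders, and each stage only records its name where a real stage would run a model.

```python
from concurrent.futures import ThreadPoolExecutor


def stage(name):
    """Build a placeholder stage that appends its name to a trace list."""
    def apply(trace):
        return trace + [name]
    return apply


def chain(*stages):
    """Compose stages so the output of one feeds the next (sequential)."""
    def run(data):
        for step in stages:
            data = step(data)
        return data
    return run


# Hypothetical registry: one chained pipeline per modality.
PIPELINES = {
    "CT": chain(stage("denoise"), stage("segment")),
    "MR": chain(stage("denoise"), stage("enhance"), stage("segment")),
}


def select_pipeline(modality):
    """Pick the pipeline registered for a modality."""
    if modality not in PIPELINES:
        raise ValueError(f"no pipeline registered for modality {modality!r}")
    return PIPELINES[modality]


def run_parallel(studies, max_workers=4):
    """Process independent (modality, data) pairs concurrently.

    Results come back in the same order as the input studies.
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(lambda s: select_pipeline(s[0])(s[1]), studies))
```

Stages within one study run sequentially because each depends on the previous output, while separate studies are independent and can run in parallel.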

End-to-End Processing Flow.

Our architecture ensures a continuous, high-bandwidth stream of data through optimized GPU-accelerated stages.

Input Data: DICOM / Volumes

Preprocessing: Denoise & Align

AI Models: Neural Processing

Inference: GPU Execution

Visualization: 3D Rendering

Output: API / PACS Export

Continuous Flow: Automated stream synchronization

Minimal Latency: Near-zero overhead between stages

Optimized Transfer: DMA-assisted data movement
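One way to picture a continuous flow between stages is a chain of generators, where each item moves through every stage before the next item is read, so no stage waits for a full dataset. The sketch below is a CPU-only analogy with hypothetical stage functions; it does not model GPU streams or DMA transfers.

```python
def preprocess(volume_ids, log):
    """Lazily yield one preprocessed item per incoming volume id."""
    for volume_id in volume_ids:
        log.append(f"preprocess {volume_id}")
        yield {"id": volume_id}


def infer(items, log):
    """Lazily attach a placeholder result to each item."""
    for item in items:
        log.append(f"infer {item['id']}")
        yield {**item, "label": "placeholder"}


def export(items, log):
    """Drain the stream; this is what pulls items through the stages."""
    exported = []
    for item in items:
        log.append(f"export {item['id']}")
        exported.append(item)
    return exported
```

Calling `export(infer(preprocess(ids, log), log), log)` interleaves the stages per item, which keeps memory use bounded by one item per stage rather than by the size of the dataset.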

Performance Optimization

CUDA-based parallel computation

TensorRT-optimized inference

Memory optimization strategies

Batch processing pipelines

Scalability and Deployment

Deployment Options

Cloud-based deployment

Hybrid environments

Scalable compute clusters

Capabilities

Horizontal scaling

Multi-GPU support

Load balancing
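Load balancing across GPUs or nodes can be illustrated with a greedy least-loaded assignment: each job goes to the worker with the smallest accumulated cost. The policy, the cost estimates, and the name `assign_jobs` are assumptions for this sketch; the source does not describe the balancing strategy actually used.

```python
import heapq


def assign_jobs(job_costs, worker_names):
    """Assign each job to the currently least-loaded worker.

    job_costs: estimated cost per job, in arbitrary units.
    Returns a dict mapping worker name to the list of job indices it received.
    """
    if not worker_names:
        raise ValueError("at least one worker is required")
    heap = [(0, name) for name in worker_names]  # (accumulated load, worker)
    heapq.heapify(heap)
    assignment = {name: [] for name in worker_names}
    for job_index, cost in enumerate(job_costs):
        load, name = heapq.heappop(heap)
        assignment[name].append(job_index)
        heapq.heappush(heap, (load + cost, name))
    return assignment
```

Adding a worker name to the list is all that horizontal scaling requires in this toy model, since the heap always routes new work to whichever worker has the most headroom.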

Stable and Predictable Outputs

The architecture is engineered for consistent performance across diverse datasets and heavy clinical workloads, keeping output variability low so teams can focus on the results.

Consistent Output Quality

Deterministic processing ensures the same input yields identical, high-fidelity results every time, regardless of load.

Robust Error Handling

Built-in redundancy and graceful degradation keep workflows moving when input data contains anomalies or edge cases.

Stable Model Execution

Isolated execution environments prevent resource contention and ensure predictable latency for AI inference.
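The claim that the same input yields identical results can be checked mechanically by hashing outputs across repeated runs. The sketch below assumes results are JSON-serializable; `output_digest` and `is_reproducible` are hypothetical helper names, not part of VolPixl.

```python
import hashlib
import json


def output_digest(result):
    """Return a SHA-256 digest of a JSON-serializable result.

    Keys are sorted so that dictionary ordering does not affect the digest.
    """
    payload = json.dumps(result, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def is_reproducible(process, sample, runs=3):
    """Run process(sample) several times and compare output digests."""
    digests = {output_digest(process(sample)) for _ in range(runs)}
    return len(digests) == 1
```

A check like this could run in regression tests or under load to detect nondeterminism introduced by, for example, unordered parallel reductions.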

Secure AI Processing Environment

Encrypted Data Handling

AES-256 encryption is applied at the ingestion layer and maintained throughout the processing lifecycle.

Secure Access Control

Identity-based access control ensures that only verified clinical personnel can interact with specific data clusters.

Privacy-First Design

De-identification protocols scrub protected health information (PHI) before data enters the neural processing pipeline.

Clinical Integrity

Architected specifically for medical environments, with full traceability and HIPAA-compliant data auditing.
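As a simplified picture of PHI scrubbing with traceability, the sketch below drops a few patient-identifying fields from a metadata dictionary and reports which fields were removed so the action can be logged. The field list is a small illustrative subset of DICOM attribute keywords and is not a complete de-identification profile; `PHI_FIELDS` and `deidentify` are hypothetical names.

```python
# Illustrative subset only; real de-identification covers many more attributes.
PHI_FIELDS = frozenset(
    {"PatientName", "PatientID", "PatientBirthDate", "PatientAddress"}
)


def deidentify(metadata):
    """Return (cleaned metadata, sorted names of removed fields).

    The input dictionary is left unmodified.
    """
    cleaned = {k: v for k, v in metadata.items() if k not in PHI_FIELDS}
    removed = sorted(k for k in metadata if k in PHI_FIELDS)
    return cleaned, removed
```

Returning the removed field names, rather than their values, lets an audit trail record what was scrubbed without storing the sensitive data itself.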

Why VolPixl AI Architecture

The architecture unifies AI workflow execution while maintaining performance, flexibility, and system integration across modern imaging environments.

End-to-end AI workflow integration

High-performance processing

Scalable and flexible design

Efficient resource utilization

Seamless system integration

Build on a Robust AI Architecture

Leverage a scalable and efficient AI architecture designed for modern medical imaging workflows.