Ingestion Performance

Fast data upload and indexing

Efficient metadata handling

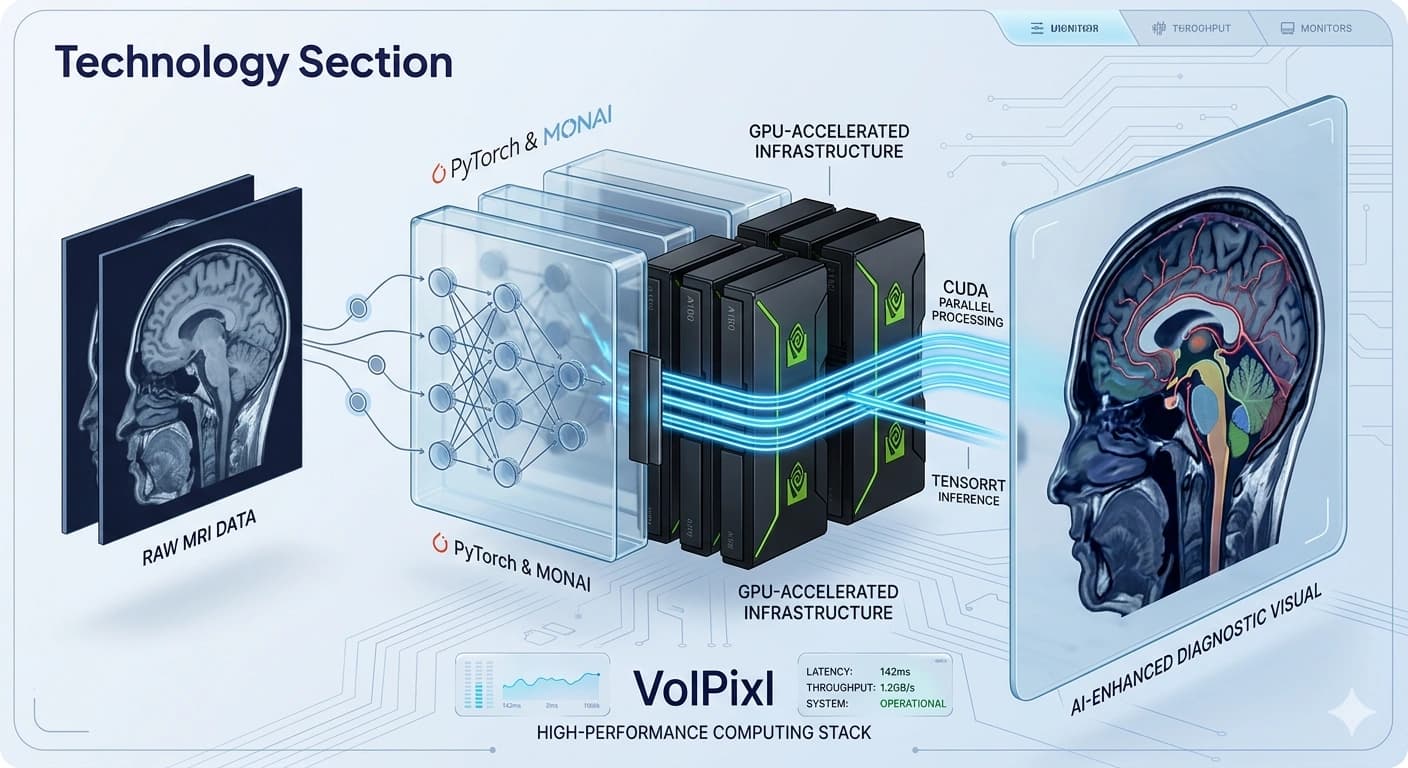

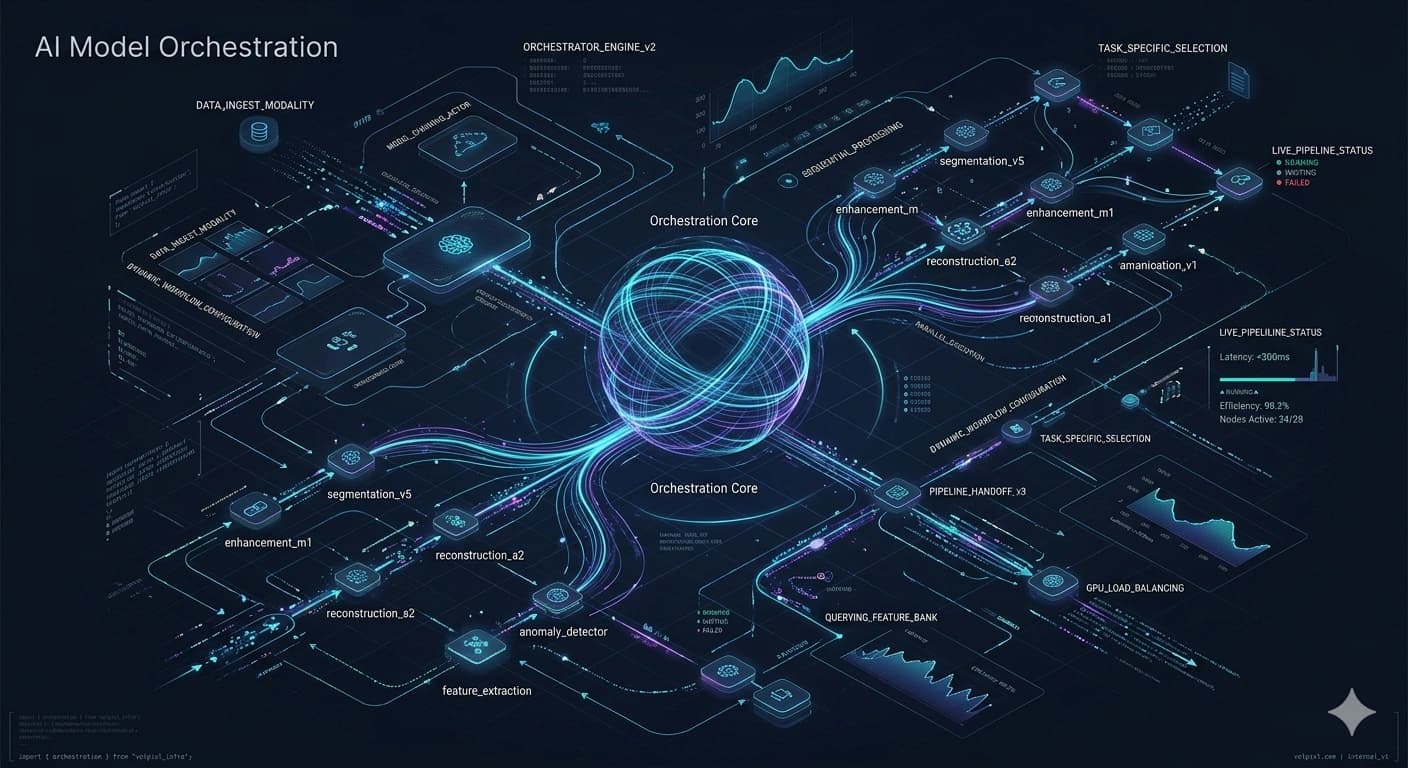

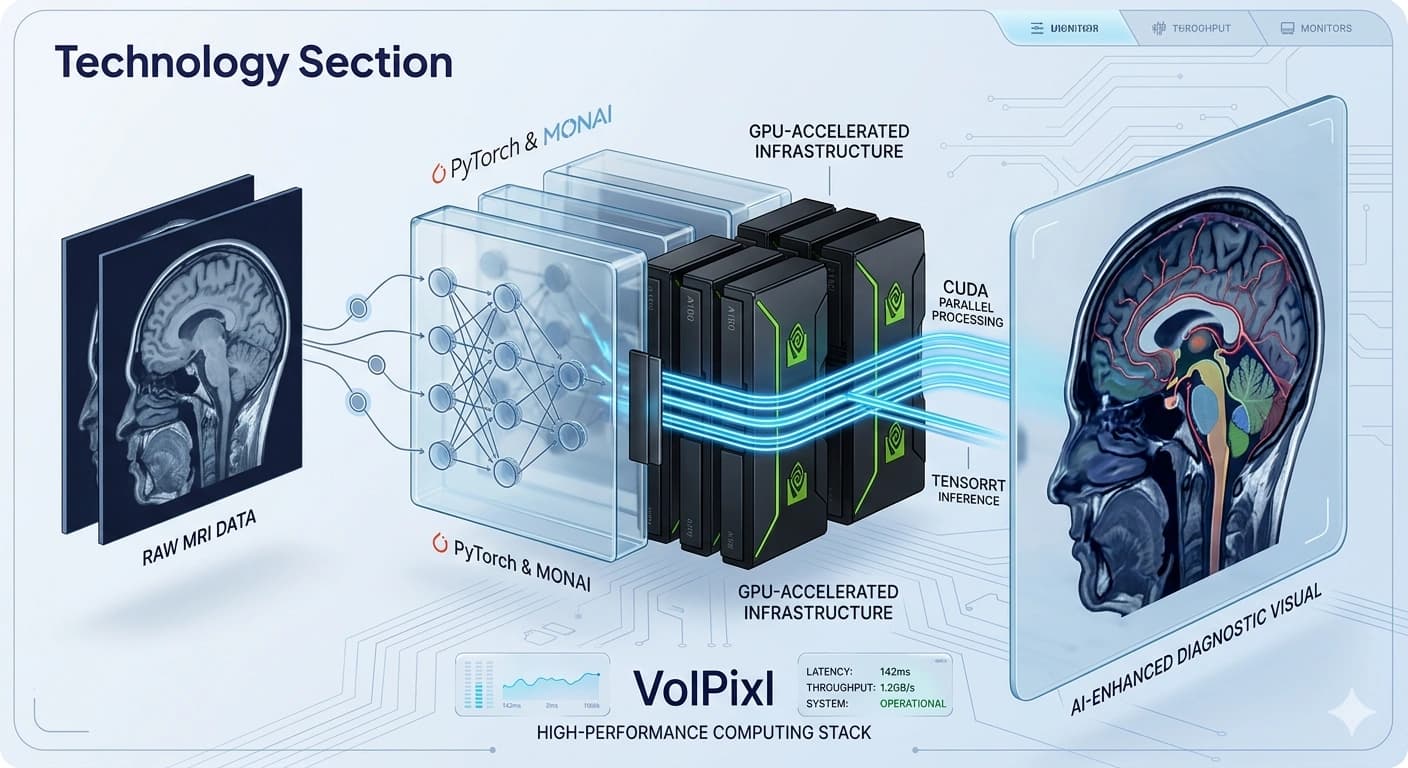

Optimized for speed, scalability, and efficiency, VolPixl delivers consistent performance across complex imaging workflows using advanced AI models and GPU-accelerated infrastructure.

Latency: Input to Output

Throughput: Dataset Volume

GPU: Inference Path

Scale: Workload Growth

Speed, Scalability, and Quality Across Demanding Imaging Workflows

Medical imaging workflows require the ability to process large datasets quickly while maintaining high output quality.

VolPixl is engineered to handle these demands through optimized AI pipelines and high-performance compute infrastructure.

The platform focuses on delivering consistent performance across different workloads, ensuring efficient processing, reduced latency, and scalable execution for both small and large-scale environments.

Controlled Evaluation

Performance is evaluated across speed, latency, throughput, scale, and resource usage under realistic imaging workload patterns, so teams can understand how the pipeline behaves in practice.

Speed measures how quickly imaging data is processed through the pipeline.

Optimized for fast AI inference

Reduced processing time per image

Efficient batch processing

Latency is the time taken from input to output generation.

Low-latency pipelines

Near real-time processing capability

Optimized data flow between stages

Throughput is the volume of data processed within a given time.

High-throughput processing pipelines

Parallel execution of tasks

Efficient handling of large datasets

Scale is the ability to handle increasing workloads.

Horizontal scaling across compute resources

Multi-GPU support

Consistent performance under load
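As a rough illustration of how the latency and throughput definitions above can be measured, the following Python sketch times a batch of items through a single function. It is not VolPixl's benchmarking code: `process_image` and `measure` are hypothetical names, and the placeholder work should be swapped for a call into your own pipeline.

```python
import math
import statistics
import time


def process_image(image):
    """Hypothetical stand-in for one pipeline call; replace with your own."""
    return sum(image)  # trivial placeholder work


def measure(images, fn=process_image, clock=time.perf_counter):
    """Return per-image latency statistics and overall throughput."""
    if not images:
        raise ValueError("images must not be empty")
    latencies = []
    start = clock()
    for image in images:
        t0 = clock()
        fn(image)
        latencies.append(clock() - t0)  # latency: input to output for one item
    elapsed = clock() - start
    ordered = sorted(latencies)
    # Nearest-rank 95th percentile of the recorded latencies.
    p95_index = math.ceil(0.95 * len(ordered)) - 1
    return {
        "mean_latency_s": statistics.mean(latencies),
        "p95_latency_s": ordered[p95_index],
        # throughput: items completed per unit of wall-clock time
        "throughput_per_s": len(images) / elapsed if elapsed > 0 else float("inf"),
    }


if __name__ == "__main__":
    sample = [list(range(1000)) for _ in range(200)]
    print(measure(sample))
```

Reporting a high percentile alongside the mean helps show whether occasional slow items are hidden behind a good average.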

Performance is measured under peak clinical loads to identify and remove bottlenecks.

VolPixl evaluates performance using controlled testing environments and real-world workload simulations. We look beyond raw speed to measure architectural stability.

Batch processing of imaging datasets

Evaluation across varying resolutions

Testing under high-concurrency loads

Real-time latency & throughput tracking
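The evaluation approach listed above (varying resolutions, high concurrency, latency and throughput tracking) can be sketched as a small load test. This is a minimal sketch under stated assumptions: `fake_infer` simulates work with a sleep proportional to pixel count, and `run_load_test` is a hypothetical helper, not part of any VolPixl API.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_infer(resolution):
    """Hypothetical workload whose cost grows with pixel count."""
    width, height = resolution
    time.sleep(width * height * 1e-9)  # simulated processing time
    return width * height


def run_load_test(resolutions, concurrency, requests_per_resolution, fn=fake_infer):
    """Issue requests at a fixed concurrency and record latency per resolution."""

    def timed(resolution):
        t0 = time.perf_counter()
        fn(resolution)
        return resolution, time.perf_counter() - t0

    # One job per (resolution, repetition) pair.
    jobs = [r for r in resolutions for _ in range(requests_per_resolution)]
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        samples = list(pool.map(timed, jobs))
    elapsed = time.perf_counter() - start

    by_resolution = {}
    for resolution, latency in samples:
        by_resolution.setdefault(resolution, []).append(latency)
    return {
        "throughput_per_s": len(jobs) / elapsed,
        "max_latency_s": {r: max(v) for r, v in by_resolution.items()},
    }


if __name__ == "__main__":
    report = run_load_test([(256, 256), (512, 512), (1024, 1024)],
                           concurrency=8, requests_per_resolution=20)
    print(report)
```

Running the same test at several concurrency levels gives a simple picture of how latency changes as load rises.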

Optimization strategies tuned to keep your workflow fast and scalable.

Parallel computation for faster processing

Efficient handling of large-scale workloads

TensorRT-based model optimization

Reduced inference time

Efficient execution of AI models

Simultaneous processing of multiple images

Improved throughput

Reduced overhead

Efficient memory allocation

Reduced resource wastage

Faster data access
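To see why processing multiple images per call can improve throughput and reduce overhead, consider a simple cost model in which every call pays a fixed overhead plus a per-image cost. The sketch below is illustrative only: the cost model, the `batched` and `estimated_time` helpers, and the numbers in the usage example are assumptions, not measurements of VolPixl.

```python
from itertools import islice


def batched(items, batch_size):
    """Yield successive lists of up to batch_size items."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    iterator = iter(items)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return
        yield batch


def estimated_time(num_images, batch_size, overhead_s, per_image_s):
    """Assumed cost model: each call pays a fixed overhead plus per-image work."""
    num_calls = -(-num_images // batch_size)  # ceiling division
    return num_calls * overhead_s + num_images * per_image_s


if __name__ == "__main__":
    for size in (1, 10):
        total = estimated_time(100, size, overhead_s=0.01, per_image_s=0.002)
        print(f"batch_size={size}: about {total:.2f} s")
```

With these made-up inputs, the model gives about 1.2 s for one image per call and about 0.3 s for batches of ten, because the fixed overhead is paid 10 times instead of 100. Real gains depend on the model, the hardware, and available memory.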

Performance improvements are applied across all stages of the pipeline, from ingestion through output generation.

Fast data upload and indexing

Efficient metadata handling

Accelerated AI model execution

Parallel processing pipelines

Smooth rendering performance

Responsive interaction with imaging data

Fast export and integration

Minimal delay in result delivery

VolPixl is designed to maintain surgical precision even as workloads increase. Our architecture avoids the "performance ceiling" common in legacy systems.

Peak Latency: 10 ms

Data Ceiling: ∞

Performance scales predictably as compute resources are added, ensuring no diminishing returns.

Maintains low-latency response times even under 10x standard clinical volume.

Dynamic traffic distribution prevents hot-spotting and ensures hardware longevity.
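One way to check whether performance scales predictably in your own deployment is to compare measured throughput against ideal linear scaling. The sketch below assumes you have throughput measurements per GPU count; `scaling_efficiency` is a hypothetical helper and the figures in the usage example are invented for illustration.

```python
def scaling_efficiency(throughput_by_gpus):
    """Map GPU count to efficiency relative to ideal linear scaling.

    The smallest configuration is the baseline; 1.0 means perfectly linear.
    """
    if not throughput_by_gpus:
        raise ValueError("at least one measurement is required")
    base_gpus = min(throughput_by_gpus)
    per_gpu_baseline = throughput_by_gpus[base_gpus] / base_gpus
    return {
        gpus: throughput / (gpus * per_gpu_baseline)
        for gpus, throughput in sorted(throughput_by_gpus.items())
    }


if __name__ == "__main__":
    # Invented example measurements: images per second at 1, 2, and 4 GPUs.
    measured = {1: 100.0, 2: 190.0, 4: 360.0}
    for gpus, eff in scaling_efficiency(measured).items():
        print(f"{gpus} GPU(s): {eff:.0%} of linear scaling")
```

An efficiency that stays close to 1.0 as GPUs are added indicates near-linear scaling; a steady decline points to a shared bottleneck such as I/O or scheduling.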

Efficient processing of large imaging datasets with minimal delays.

Handling complex datasets with consistent performance.

Low-latency APIs for real-time or near real-time processing.

VolPixl ensures reliable performance across different environments.

Stable processing times

Consistent output quality

Reliable system behavior

Performance optimization is an ongoing process.

Continuous model optimization

Infrastructure improvements

Monitoring and tuning of pipelines

Performance may vary depending on data size, system configuration, and workload characteristics. Benchmarks are indicative and based on controlled testing environments.

Use benchmark results as directional guidance and validate performance against your own modalities, deployment footprint, and workload profile.

Leverage optimized AI pipelines and GPU-accelerated infrastructure for fast, scalable medical imaging workflows.